A single contaminated batch can trigger recalls costing millions of dollars, invite regulatory scrutiny, and permanently damage the brand reputation you have spent years building. In the United States alone, the economic burden of foodborne illness is estimated at $74.7 billion annually, while the average food recall costs a company roughly $10 million in direct expenses.

For QA/QC managers, food testing is not just a regulatory checkbox. It is the frontline defense that protects public health, preserves supply chain integrity, and safeguards your bottom line.

Whether you manage a meat processing facility, a dairy plant, or a multi-category food operation, the testing methods you choose directly influence how quickly you can release product. They also determine how confidently you pass audits and how resilient your operation is against contamination events.

This guide walks you through the core categories of food testing methods, advanced diagnostic techniques, and method validation essentials. You’ll learn the best practices for building a monitoring strategy that keeps your facility ahead of both regulators and contaminants.

What Is Food Safety Testing and Why Does It Matter for Manufacturers?

From a commercial standpoint, food safety testing encompasses the scientific evaluation of raw materials, in-process products, finished goods, and production environments to detect biological, chemical, and physical hazards before they reach the consumer. It goes beyond public health compliance to directly influence supply chain reliability, shelf-life accuracy, and brand credibility.

When implemented as a strategic function rather than a reactive formality, food safety testing delivers measurable business value:

- Preventing costly product recalls: Hospitalizations linked to recalled food products more than doubled in 2024, rising from 230 in 2023 to 487. Catching contamination before distribution protects you from these cascading costs.

- Meeting strict global regulatory requirements: Whether your facility operates under FDA/FSMA rules, GFSI-benchmarked schemes, or ISO 17025 accreditation standards, documented testing programs are your evidence of due diligence during audits. For a comprehensive overview of these frameworks, explore this guide to food safety standards.

- Validating shelf-life and ensuring consistent product quality: Testing confirms that your products meet specifications for microbial limits, chemical composition, and sensory attributes throughout their intended shelf life. This consistency builds the kind of consumer trust that drives repeat purchases and long-term retail partnerships.

The Core Categories of Food Testing Methods

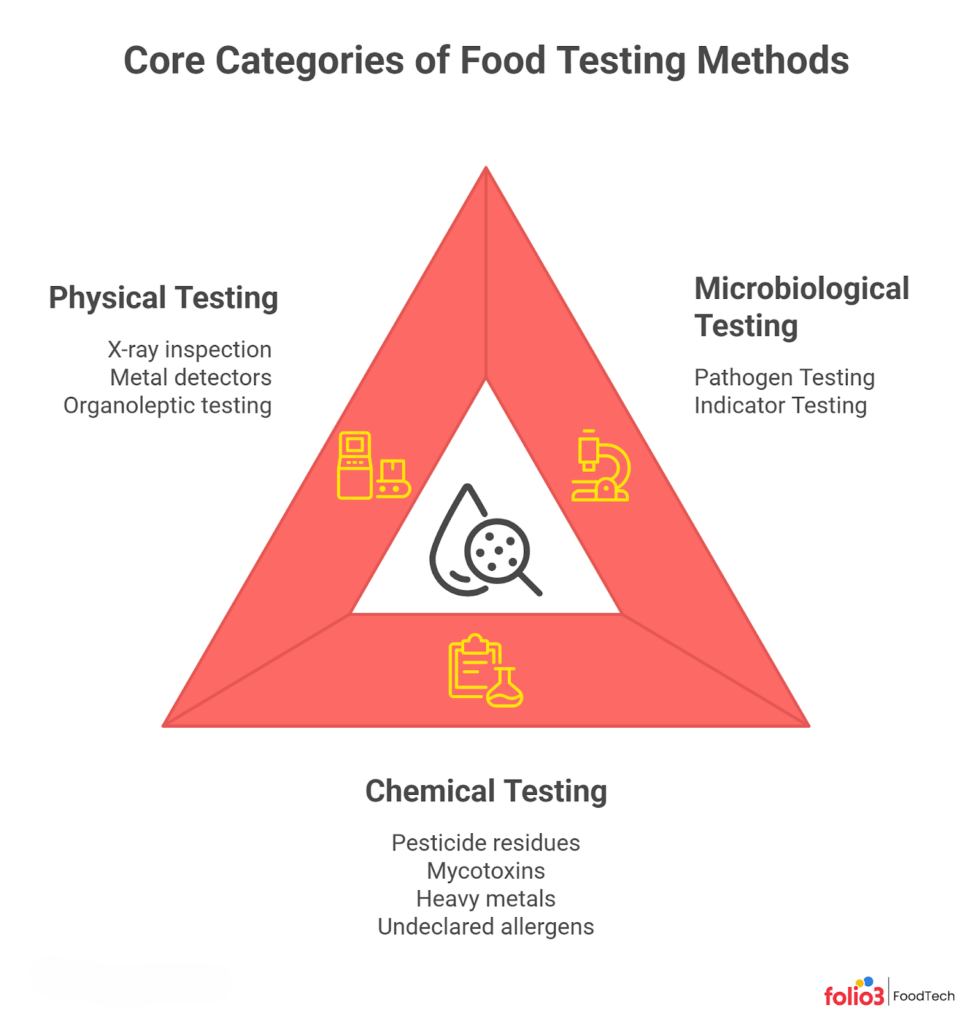

Every food testing program rests on three pillars: microbiological, chemical, and physical testing. Understanding how each works helps you build a defense that covers all hazard types.

Microbiological Food Testing Methods

Microbiological testing is the backbone of any food safety program because biological hazards, primarily bacteria, viruses, and parasites, are the leading drivers of foodborne illness outbreaks. Your testing program needs to distinguish between two critical categories: pathogen testing and indicator organism testing.

Pathogen Testing: Pathogen testing targets specific dangerous organisms such as Salmonella, Listeria monocytogenes, E. coli O157:H7, and Campylobacter. These are the organisms directly responsible for illness and the ones regulators are most concerned about. For a deeper look at building an effective detection program, refer to this guide on food pathogen testing best practices.

Indicator Testing: Indicator organism testing, on the other hand, measures general microbial populations using metrics like Aerobic Plate Count (APC), coliforms, and Enterobacteriaceae. These organisms are not necessarily harmful themselves, but elevated counts signal that your sanitation or process controls may be failing, essentially functioning as an early warning system.

Chemical and Analytical Testing

Chemical hazards are often invisible and odorless, which makes analytical testing essential for catching what your eyes, nose, and microbial cultures cannot. This category of food quality testing methods covers a broad spectrum of contaminants, each posing distinct risks to consumer health and regulatory compliance.

Your chemical testing program should target the following hazard groups:

- Pesticide residues: Particularly critical for fresh produce and imported commodities, where different countries apply varying maximum residue limits (MRLs).

- Mycotoxins: Naturally occurring toxins produced by molds (such as aflatoxins in grains and nuts) that can contaminate crops during growth, harvest, or storage.

- Heavy metals: Lead, cadmium, mercury, and arsenic can accumulate in food through contaminated soil, water, or industrial processes. Recalls due to excessive lead contamination rose sharply in 2024, with 13 separate recall events recorded.

- Undeclared allergens: Allergen cross-contact is the single biggest driver of food recalls. In 2024, undeclared allergens accounted for 101 recalls, representing 34% of all FDA recall events.

Beyond hazard detection, chemical testing also supports nutritional labeling compliance. Analysis of macronutrients (protein, fat, carbohydrates), vitamins, and minerals ensures that your nutrition facts panel is accurate. Accurate labeling is a regulatory requirement under FDA labeling rules and is increasingly scrutinized by consumers making health-conscious purchasing decisions.

Physical and Sensory Testing

Physical contaminants, such as glass shards, metal fragments, plastic pieces, wood splinters, and bone chips, pose an immediate injury risk to consumers and are among the most visible causes of complaints and recalls. Identifying these hazards requires in-line detection equipment integrated directly into your production process.

- X-ray inspection systems are the most versatile option, capable of detecting metal, glass, stone, bone, and dense plastics regardless of packaging material.

- Metal detectors remain cost-effective for facilities where metal contamination is the primary concern. Sieving and filtration systems add another layer of defense for powdered or liquid products.

- Sensory or organoleptic testing evaluates attributes like taste, smell, texture, color, and appearance. While less quantitative than laboratory methods, trained sensory panels provide a consumer-centric assessment that catches quality deviations, off-flavors, or texture changes that instruments might miss.

The key is selecting the right equipment for your product matrix and calibrating it daily to maintain sensitivity. A strong food quality assurance program integrates both instrumental and sensory evaluations to provide a complete quality picture.

Advanced Food Safety Diagnostics and Monitoring Techniques

The global food safety testing market was valued at approximately $26 billion in 2025 and is projected to grow at a CAGR of roughly 7.8% through 2033. Much of this growth is driven by the adoption of advanced diagnostics that deliver faster results with higher specificity than traditional methods. If your testing program still relies exclusively on culture-based methods, you may be leaving speed and precision on the table.

PCR and DNA-Based Testing

PCR (Polymerase Chain Reaction) has become the workhorse of rapid pathogen detection in modern food safety laboratories. The technology works by amplifying specific DNA sequences unique to target pathogens. Through repeated heating and cooling cycles, a single DNA fragment is multiplied into millions of copies that can be detected via fluorescence in real-time (quantitative PCR or qPCR).
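The arithmetic behind that amplification can be sketched in a few lines. This is an idealized model, not a description of any commercial platform: it assumes every cycle multiplies the copy count by a fixed factor, and the function names and the `efficiency` parameter are illustrative choices rather than terms from a specific instrument.

```python
def copies_after_cycles(initial_copies: int, cycles: int, efficiency: float = 1.0) -> float:
    """Idealized PCR growth: each cycle multiplies the copy count by (1 + efficiency).

    efficiency = 1.0 means perfect doubling; real reactions are usually somewhat lower.
    """
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be greater than 0 and at most 1")
    return initial_copies * (1.0 + efficiency) ** cycles


def cycles_to_reach(initial_copies: int, target_copies: float, efficiency: float = 1.0) -> int:
    """Smallest whole number of cycles needed to reach at least target_copies."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be greater than 0 and at most 1")
    copies = float(initial_copies)
    cycles = 0
    while copies < target_copies:
        copies *= 1.0 + efficiency  # one heating/cooling cycle
        cycles += 1
    return cycles


if __name__ == "__main__":
    # One starting fragment with perfect doubling passes a million copies at cycle 20.
    print(copies_after_cycles(1, 20))
    print(cycles_to_reach(1, 1_000_000))
```

Under perfect doubling, a single fragment exceeds one million copies after 20 cycles, which is why a modest number of cycles is enough to make a trace target detectable. Lower efficiency pushes that cycle count up.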

What makes PCR invaluable for QA/QC operations is its combination of speed, sensitivity, and specificity:

Speed: Most commercial PCR platforms deliver confirmed pathogen results within 4 to 24 hours, compared to the 2 to 5 days typically required for traditional culture confirmation. For perishable goods with tight distribution windows, this time savings can mean the difference between timely market release and costly inventory holds.

Sensitivity: PCR can detect as few as 1 to 10 colony-forming units per sample after enrichment, catching low-level contamination that culture methods might miss in heterogeneous food matrices.

Multiplexing capability: Advanced PCR panels can simultaneously screen for multiple pathogens (for instance, Salmonella, Listeria, and Shiga toxin-producing E. coli, or STEC) in a single test run, reducing both labor and consumable costs.

However, PCR does have limitations you should be aware of. It detects DNA, which means it cannot distinguish between live and dead organisms without additional processing steps. For regulatory submissions that require a viable isolate, PCR-positive results often still need culture confirmation. Most facilities use PCR as a rapid screening tool and reserve culture methods for confirmation and further characterization.

Chromatography and Mass Spectrometry

When it comes to identifying complex chemical residues at trace concentrations, chromatography coupled with mass spectrometry is the gold standard. These techniques are essential for detecting pesticides, veterinary drug residues, mycotoxins, and persistent organic pollutants (POPs) that other methods simply cannot resolve.

Liquid Chromatography-Mass Spectrometry (LC-MS): LC-MS is particularly well-suited for thermally unstable or polar compounds, including many modern pesticides, antibiotics, and mycotoxins. It works by separating compounds in a liquid phase and then identifying them by their mass-to-charge ratio.

Gas Chromatography-Mass Spectrometry (GC-MS): GC-MS excels at volatile and semi-volatile compounds, making it the method of choice for detecting certain pesticide residues, flavor compounds, and environmental contaminants.

For your facility, these methods are critical in several scenarios:

- Multi-residue screening: A single LC-MS/MS run can screen for hundreds of pesticide compounds simultaneously, giving you comprehensive coverage without running dozens of separate tests.

- Allergen quantification: While immunoassays provide rapid allergen screening, LC-MS/MS offers definitive quantification of specific allergenic proteins, which is increasingly relevant as regulators move toward establishing threshold levels.

- Authenticity verification: Chemical profiling through chromatographic methods can identify adulteration, such as the addition of cheaper oils to olive oil or the substitution of lower-grade ingredients, supporting your food fraud prevention efforts.

The main trade-off is cost. LC-MS and GC-MS instruments represent significant capital investments, and they require trained analysts to operate and interpret results. Many manufacturers outsource this testing to accredited third-party laboratories while keeping faster screening methods in-house.

Whole Genome Sequencing (WGS)

Whole Genome Sequencing represents the most advanced tool in the food safety diagnostics arsenal. Rather than identifying a pathogen by a single gene or surface marker, WGS reads the entire genetic code of an organism, providing a level of resolution that no other method can match.

For food manufacturers, WGS is a powerful tool in three key areas:

Outbreak source-tracking: When a foodborne illness cluster is identified, public health agencies like the CDC and FDA use WGS to match patient isolates with environmental or product isolates at the genomic level. This means contamination can be traced back to a specific production line, ingredient lot, or supplier with extraordinary precision.

Food authenticity and fraud prevention: WGS can verify species identity in complex products such as seafood, meat blends, and herbal supplements. It can also detect economically motivated adulteration, such as substituting a cheaper fish species for a premium one.

Antimicrobial resistance (AMR) profiling: WGS can identify resistance genes in foodborne isolates, helping your facility understand whether organisms in your supply chain carry resistance markers that could complicate treatment of human infections.

While WGS is not yet a routine in-house test for most food manufacturers due to equipment costs and bioinformatics requirements, its use in regulatory investigations means that the organisms found in your facility could be characterized at this level. That makes it even more critical to maintain strong preventive measures for food safety and robust environmental monitoring programs.

Method Validation, Verification, and Fitness for Purpose

Choosing the right test is only half the equation. Proving that your test works correctly in your specific laboratory and with your specific product matrices is what separates a compliant operation from one that is vulnerable to audit findings.

Method Validation

Method validation is the process by which a testing method is scientifically demonstrated to be reliable, accurate, and reproducible for its intended purpose. This is typically performed by standard-setting bodies such as AOAC International or ISO (through standards like ISO 16140) using multi-laboratory collaborative studies.

When a method receives AOAC Official Method status or ISO validation, it means the method has been tested across multiple laboratories, sample types, and conditions, and has consistently delivered reliable results. For your facility, using validated methods provides regulatory confidence and defensible data during audits or investigations.

Method Verification

While validation proves that a method works in general, verification proves that your specific laboratory can execute the validated method correctly. Verification involves demonstrating that your lab, using its own equipment, reagents, and trained analysts, can achieve the performance parameters established during validation. Verification is a requirement under ISO 17025 accreditation and is increasingly expected by GFSI-benchmarked certification schemes.

Skipping verification is one of the most common audit non-conformances, so do not assume that purchasing a validated test kit automatically means your results are reliable. You need documented evidence that your team can run it correctly in your environment. For more on meeting these accreditation requirements, review the food and beverage certifications guide.

Fitness for Purpose

This is where many QA/QC managers encounter unexpected failures. A method can be fully validated and properly verified, yet still produce unreliable results when applied to a product matrix it was not designed for. This is known as the matrix effect.

For example, a rapid immunoassay validated for detecting allergens in dairy products may produce false negatives when applied to highly acidic fruit juices because the low pH degrades the target proteins. Similarly, a PCR method validated in lean ground beef might underperform in high-fat peanut butter because the fat interferes with DNA extraction efficiency.

Before deploying any method on a new product type, you need to conduct matrix-specific spiking studies to confirm that the method performs adequately in that particular food environment. Documenting fitness for purpose is your strongest defense if test results are ever challenged during a regulatory investigation or customer audit.
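For quantitative methods, the core calculation in a spiking study is percent recovery: how much of a known added amount the method actually reports in that matrix. The sketch below is illustrative only. The function names are hypothetical, and the 70 to 120 percent acceptance window is a placeholder assumption; your own acceptance criteria should come from the method's validation documents and your accreditation requirements. Qualitative pathogen methods are assessed differently, typically by detection rate at a given inoculation level.

```python
from typing import Iterable


def percent_recovery(spiked_result: float, unspiked_result: float, spike_added: float) -> float:
    """Percent of the added analyte that the method recovered from the matrix.

    spiked_result   -- measured concentration in the spiked sample
    unspiked_result -- measured concentration in the same matrix without the spike
    spike_added     -- known concentration added (same units as the results)
    """
    if spike_added <= 0:
        raise ValueError("spike_added must be positive")
    return (spiked_result - unspiked_result) / spike_added * 100.0


def recoveries_acceptable(recoveries: Iterable[float], low: float = 70.0, high: float = 120.0) -> bool:
    """True only if every replicate falls inside the acceptance window.

    The default window is a placeholder assumption, not a regulatory limit.
    """
    values = list(recoveries)
    if not values:
        raise ValueError("at least one recovery value is required")
    return all(low <= r <= high for r in values)


if __name__ == "__main__":
    # Three hypothetical replicates of a new matrix, each spiked at 10.0 units.
    replicates = [(9.0, 0.5, 10.0), (8.7, 0.5, 10.0), (9.4, 0.5, 10.0)]
    recoveries = [percent_recovery(s, u, a) for s, u, a in replicates]
    print([round(r, 1) for r in recoveries])
    print(recoveries_acceptable(recoveries))
```

Keeping the raw replicate values alongside the pass/fail outcome gives you the documented evidence that the fitness-for-purpose argument depends on.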

Traditional vs. Rapid Food Testing Methods

Selecting the right testing methodology depends on your product type, turnaround requirements, and regulatory expectations. This comparison helps you evaluate your options at a glance.

| Methodology Type | Turnaround Time | Cost | Accuracy / Specificity | Best Use Case |
| --- | --- | --- | --- | --- |
| Traditional Culture Methods (Agar) | 2–5 days | Low per test | Gold standard; definitive confirmation | Regulatory confirmation; shelf-stable products |
| Immunoassays (ELISA) | 2–4 hours (after enrichment) | Moderate | High sensitivity; some cross-reactivity risk | Allergen screening; mycotoxin detection |
| Molecular Methods (PCR) | 4–24 hours | Moderate–High | Very high specificity; detects DNA from both live and dead organisms | Rapid pathogen screening; perishable goods |
| Analytical Chemistry (LC-MS/GC-MS) | 1–3 days | High | Exceptional; quantitative at trace levels | Chemical residues; pesticides; food fraud |

In practice, the choice between rapid and traditional methods is rarely either-or. Most effective QA/QC programs use a tiered approach. Rapid methods like PCR and ELISA handle day-to-day screening, enabling faster product release decisions for perishable goods that cannot wait 2 to 5 days for culture results. Traditional culture methods serve as the confirmation layer when rapid screens flag a positive, and they remain the standard for regulatory submissions.
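The tiered logic can be written down as a small decision function. This is a simplified sketch, not a release procedure: the `Decision` names and the rule that a culture-negative result after a rapid positive goes to review are assumptions made for illustration, and your own disposition rules should follow your food safety plan and regulatory requirements.

```python
from enum import Enum
from typing import Optional


class Decision(Enum):
    RELEASE = "release"
    HOLD_FOR_CULTURE = "hold for culture confirmation"
    REJECT = "reject"
    REVIEW = "review"


def tiered_decision(rapid_positive: bool, culture_positive: Optional[bool] = None) -> Decision:
    """Combine a rapid screen with an optional culture confirmation.

    rapid_positive   -- result of the rapid screen (PCR or ELISA)
    culture_positive -- culture result, or None if confirmation is not back yet
    """
    if not rapid_positive:
        # Negative screen: no confirmation tier is triggered.
        return Decision.RELEASE
    if culture_positive is None:
        # Presumptive positive: hold the lot until culture results arrive.
        return Decision.HOLD_FOR_CULTURE
    if culture_positive:
        return Decision.REJECT
    # Screen and culture disagree: assumed here to need a documented review.
    return Decision.REVIEW


if __name__ == "__main__":
    print(tiered_decision(False).value)
    print(tiered_decision(True).value)
    print(tiered_decision(True, culture_positive=True).value)
```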

Your product type matters too. If you manufacture shelf-stable canned goods with a 12-month shelf life, the extra day or two for culture results is a manageable wait. But if you are processing fresh-cut salads or ready-to-eat deli meats with 7-to-14-day shelf lives, rapid testing becomes operationally essential. Conducting a thorough food safety risk assessment will help you determine the right balance of methods for your specific product lines and hazard profiles.

Best Practices for a Robust Food Safety Monitoring Strategy

Testing individual product batches is necessary but insufficient. A truly robust food safety monitoring strategy extends across your facility environment, your laboratory systems, and your data infrastructure.

Implement Environmental Monitoring Programs (EMP)

An Environmental Monitoring Program shifts your testing approach from reactive to proactive. An effective EMP involves systematic swabbing of food-contact surfaces, non-food-contact surfaces, drains, floors, walls, condensation points, and air handling systems on a defined schedule.

The goal is to identify harborage sites, areas where pathogens like Listeria can establish persistent colonies that repeatedly contaminate product. Trend analysis of your EMP data over time reveals whether your sanitation programs are actually working or whether certain zones consistently test positive. Integrating your EMP data with a digital facility environment monitoring system allows you to spot these trends in real time rather than discovering them during a third-party audit.
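A basic version of that trend analysis is a positivity rate per sampling zone. The sketch below assumes swab results are available as simple (zone, positive) pairs. The zone names, the function names, and the 10 percent flagging threshold are all hypothetical and would need to be set from your own EMP design.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def positivity_by_zone(swabs: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Fraction of positive swabs for each zone."""
    totals: Dict[str, int] = defaultdict(int)
    positives: Dict[str, int] = defaultdict(int)
    for zone, is_positive in swabs:
        totals[zone] += 1
        if is_positive:
            positives[zone] += 1
    return {zone: positives[zone] / totals[zone] for zone in totals}


def zones_needing_attention(swabs: Iterable[Tuple[str, bool]], threshold: float = 0.10) -> List[str]:
    """Zones whose positivity rate exceeds the threshold, sorted by name.

    The default threshold is an illustrative assumption, not a standard.
    """
    rates = positivity_by_zone(swabs)
    return sorted(zone for zone, rate in rates.items() if rate > threshold)


if __name__ == "__main__":
    # Hypothetical swab history for two sampling sites.
    history = [
        ("drain-3", True), ("drain-3", True), ("drain-3", False),
        ("slicer", False), ("slicer", False),
    ]
    print(positivity_by_zone(history))
    print(zones_needing_attention(history))
```

A zone that keeps appearing in the flagged list across sampling periods is a candidate harborage site and a signal to revisit sanitation in that area.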

Data Acceptance and ISO 17025 Accreditation

The reliability of your food safety data is only as strong as the laboratory producing it. Whether you operate an in-house lab or send samples to a third-party contract laboratory, verifying that the lab holds ISO 17025 accreditation is non-negotiable for critical testing. ISO 17025 establishes the competence, impartiality, and consistency requirements for testing and calibration laboratories.

When evaluating third-party labs, look beyond the certificate. Confirm that the lab is accredited specifically for the test methods and food matrices relevant to your products. A lab accredited for Salmonella testing in poultry is not automatically qualified to test for the same organism in spices or produce. Understanding the scope of accreditation is essential for ensuring your data will hold up under regulatory scrutiny. This principle applies equally to FSMA compliance and GFSI certification audits.

Digitize with Foodtech

If your facility still relies on paper logbooks, manual spreadsheets, or disconnected systems to track testing results, corrective actions, and audit documentation, you are introducing unnecessary risk into your operation. Paper-based systems are prone to transcription errors, difficult to search during time-sensitive investigations, and nearly impossible to analyze for trends at scale.

Transitioning to a digital Food Safety Management System (FSMS) consolidates your testing data, environmental monitoring records, CAPA documentation, and supplier verification files in a single, searchable platform.

The benefits are immediate and tangible:

- Real-time traceability when a customer or regulator asks you to trace a lot

- Automated alerts when test results breach your critical limits

- Seamless audit readiness that eliminates the last-minute scramble to compile records
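The automated-alert idea reduces to comparing each incoming result against a stored limit. The following sketch shows that comparison in isolation. The `Limit` class, the analyte names, and the numeric limits are placeholders invented for illustration; they are not regulatory values and do not reflect any particular FSMS product.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Limit:
    analyte: str
    max_value: float
    unit: str


def find_breaches(results: Dict[str, float], limits: List[Limit]) -> List[str]:
    """Return one alert message for each result above its limit.

    Analytes with no reported result are skipped rather than treated as passing,
    so a missing test should be caught by a separate completeness check.
    """
    alerts = []
    for limit in limits:
        value = results.get(limit.analyte)
        if value is not None and value > limit.max_value:
            alerts.append(
                f"{limit.analyte}: {value} {limit.unit} exceeds limit of "
                f"{limit.max_value} {limit.unit}"
            )
    return alerts


if __name__ == "__main__":
    # Placeholder limits for illustration only.
    limits = [
        Limit("aerobic_plate_count", 100000.0, "CFU/g"),
        Limit("lead", 0.1, "mg/kg"),
    ]
    lot_results = {"aerobic_plate_count": 250000.0, "lead": 0.02}
    for message in find_breaches(lot_results, limits):
        print(message)
```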

For manufacturers looking to take this even further, AI-driven tools in the food and beverage industry are now enabling predictive analytics. They can flag potential contamination risks before they materialize, moving your food safety program from reactive to truly predictive.

Future-Proofing Your Food Testing Protocols

Comprehensive food testing is not a cost center; it is a competitive advantage. The manufacturers who invest in validated testing methods and digitize their monitoring workflows are the ones who release products faster, pass audits with confidence, and recover from supply chain disruptions more efficiently.

Start by evaluating your current testing methods for fitness for purpose across every product matrix you handle. Identify where rapid methods can accelerate your release cycles. Finally, invest in digital tools, such as a comprehensive food traceability system, that turn testing data into actionable intelligence.

The question is not whether your food testing program is good enough for today. It is whether it is ready for the regulatory, consumer, and technological expectations of tomorrow. If you are ready to evaluate and strengthen your testing protocols, consult with foodtech experts who understand the intersection of food science, compliance, and technology.

FAQs

What Is the Difference Between Food Safety Testing and Food Quality Testing?

Food safety testing focuses specifically on detecting hazards that could cause illness, such as pathogens, chemical residues, and physical contaminants. Food quality testing evaluates broader product attributes like taste, texture, color, and nutritional accuracy. Both are essential, but safety testing is non-negotiable from a regulatory perspective.

How Often Should a Food Manufacturing Facility Conduct Microbiological Testing?

Testing frequency depends on your product risk profile, regulatory requirements, and production volume. High-risk products like ready-to-eat meats and fresh-cut produce typically require per-lot or daily testing, while lower-risk shelf-stable products may follow a statistically validated sampling plan. Your HACCP plan should define the minimum frequency for each critical control point.

Can Rapid Testing Methods Fully Replace Traditional Culture Methods?

Not yet. Rapid methods like PCR and ELISA are excellent for screening, but most regulatory frameworks still require culture-based confirmation for positive results. The most effective programs use rapid methods for day-to-day screening and reserve culture methods for confirmation and strain typing when needed.

What Should QA Managers Look for When Selecting a Third-Party Testing Laboratory?

Prioritize ISO 17025 accreditation with a scope that covers the specific test methods and food matrices relevant to your products. Also evaluate turnaround time reliability, data reporting formats, chain-of-custody procedures, and whether the lab participates in proficiency testing programs to demonstrate ongoing competence.

How Does FSMA 204 Affect Food Testing and Traceability Requirements?

FSMA Section 204 introduces enhanced recordkeeping requirements for foods on the Food Traceability List, requiring facilities to maintain Key Data Elements at Critical Tracking Events throughout the supply chain. While it does not mandate specific testing methods, it makes robust traceability in the food industry essential for connecting testing data to specific lots, suppliers, and distribution pathways.